Evaluating Conversational Implicatures in Large Language Models

Recent progress in large language models (LLMs) raises new challenges for evaluating their reasoning and pragmatic abilities. Standard benchmarks, often based on multiple-choice formats, tend to overlook pragmatic subtleties, which makes it difficult to assess how LLMs diverge from human language processing. This talk addresses this gap by systematically testing LLMs on conversational implicatures, a core domain of pragmatic inference grounded in Grice’s Cooperative Principle.

We design a fine-grained evaluation across eight implicature types, including particularized and generalized implicatures, scalar implicatures, bridging, and indirect speech acts. To minimize overlap with the models' training data, we construct a fully handcrafted dataset of 708 stimuli. Model performance is compared with human judgments collected via Prolific.

Our analysis combines three evaluation methods (multiple-choice QA, probability-based scoring, and open-ended evaluation), which lets us probe both accuracy and interpretive strategies. The results show where LLMs succeed, where they diverge from human inference, and what these findings imply for the broader debate on whether pragmatic reasoning is uniquely human and whether existing pragmatic theories need to be revisited.

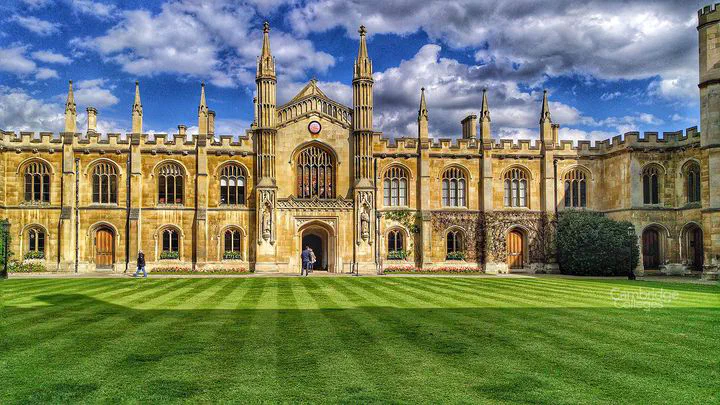

University of Cambridge